In today's data-driven world, AI isn't just on the map - it's the fast lane. From mining critical insights and tracking regulatory shifts to forecasting risk, monitoring employee health and safety metrics, and streamlining reporting, AI is steering the future of EHS, sustainability, and compliance. But as professionals take the wheel, what roadblocks and green lights lie ahead on the journey? This series of articles will help you navigate the future of AI in EHS.

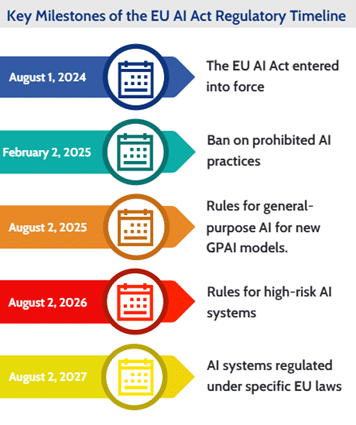

Europe has long been at the forefront of regulatory affairs, so it's no surprise that back in 2021, the European Parliament touted what is now Regulation (EU) 2024/1689, the Artificial Intelligence Act (EU AI Act), as the world's first comprehensive AI law. These days, the European Committee for Standardization is drafting frameworks to rein in artificial intelligence. Yet even in regulation-savvy Europe, lawmakers are still scrambling to catch up, because AI has burst into our lives and is evolving at breakneck speed.

As a result, regulators must manage many variables at once while the technology keeps changing under them. This forces them to narrow their focus to specific areas, such as safety concerns.

AI raises many unresolved issues, including copyright and questions of responsibility, but Europe made a bold move. As part of the EU digital strategy, the European Commission proposed the first EU artificial intelligence law in April 2021, built on a risk-based classification of AI. Artificial intelligence systems that can be used in different applications must be analyzed and classified according to the risk they pose to users. Each of the four risk levels is paired with compliance requirements that must be strictly implemented. The systems should also remain under human oversight, rather than being left to automated controls alone, to avoid unintended or harmful outcomes.

At first glance, that may not seem like much. Let's be honest: we badly need safe, well-regulated artificial intelligence, which can bring many benefits, such as better healthcare and workplace environments, more efficient manufacturing, and more sustainable energy. On the other hand, AI-enabled fraud and fake videos are on the rise and are, unfortunately, also part of the story. Regulators have no choice but to keep pace with booming artificial intelligence trends.

EU AI Act Sets Tiered Rules Based on Risk Levels

Years of debate produced a tiered framework that places obligations on providers according to the level of risk their AI systems pose to users. While most AI applications are considered low risk, all will be subject to assessment.

Banned Practices

According to the new rules, applications deemed to pose “unacceptable risk” are prohibited in the EU. These include cognitive behavioral manipulation, such as voice-enabled children's toys encouraging dangerous actions; social scoring systems that rank people by behavior or their socioeconomic status; and biometric identification and categorization, including real-time facial recognition in public spaces.

Limited exemptions apply for law enforcement. Real-time biometric surveillance may be permitted in narrowly defined, serious cases, while delayed biometric identification could be used in prosecuting serious crimes, subject to prior court approval.

High-Risk Systems

AI deemed to affect safety or fundamental rights falls into the “high risk” category, with the following two main groups:

- Systems embedded in products already covered by EU product safety legislation, such as toys, cars, aviation, lifts, and medical devices.

- Standalone systems in sensitive sectors, including critical infrastructure, education, employment, access to essential services, law enforcement, migration and border control, and legal decision-making.

All high-risk systems must be registered in an EU database, undergo assessment before market entry, and remain subject to monitoring throughout their life cycles. Users will be able to lodge complaints with national authorities.

Limited/Low Risk: Transparency and General-Purpose Models

AI systems that generate or manipulate image, audio, or video content, as well as chatbots, are permitted subject to minimal transparency requirements: they must alert users that they are interacting with AI. Users can then decide whether or not to continue using the systems.

These transparency requirements include:

- Making clear that the content is generated by AI.

- Designing the model to prevent it from generating illegal content.

- Publishing summaries of copyrighted data used for training.

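General-purpose AI tools, such as ChatGPT, are not classified as high risk, but they must still meet the transparency requirements above and comply with EU copyright law. In addition, the EU AI Act and the EU AI Office urge providers to create and implement voluntary codes of practice.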
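For teams building an internal inventory of AI tools, the tiered logic described above can be sketched as a simple lookup. This is only an illustration, not a legal classification method: the use-case tags, the `tier_for` and `obligations_for` helpers, and the wording of the obligations are assumptions drawn from the examples in this article, and a real classification requires legal review against the regulation itself.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations per tier, paraphrased from this article (not the legal text).
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited in the EU"],
    RiskTier.HIGH: [
        "register in the EU database",
        "undergo assessment before market entry",
        "monitor throughout the life cycle",
    ],
    RiskTier.LIMITED: ["tell users they are interacting with AI"],
    RiskTier.MINIMAL: [],
}

# Hypothetical use-case tags, taken from the article's own examples.
USE_CASE_TIER = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "medical_device": RiskTier.HIGH,
    "employment_screening": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
}


def tier_for(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known tag, or None if it is not yet classified."""
    return USE_CASE_TIER.get(use_case)


def obligations_for(use_case: str) -> list:
    """Return the obligations attached to a use case's tier."""
    tier = tier_for(use_case)
    if tier is None:
        # Unknown systems are flagged for assessment, never assumed low risk.
        return ["classify the system before applying tier obligations"]
    return OBLIGATIONS[tier]


print(obligations_for("employment_screening"))
```

Returning `None` for an unlisted tag, rather than defaulting to the minimal tier, mirrors the point that every system is subject to assessment.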

EU Seeks to Boost Innovation and Support AI Startups

The EU AI Act is not only about curbing risks - it also aims to spur innovation and strengthen Europe's position in artificial intelligence. The legislation allows companies to develop and test general-purpose AI models ahead of public release, with the goal of giving startups and small businesses room to grow.

National authorities will be required to provide “regulatory sandboxes,” testing environments that replicate real-world conditions. Lawmakers say these will help small- and medium-sized enterprises compete more effectively in the fast-expanding European AI market.

Enforcement and Implementation Oversight

Besides the requirements found in the regulation, operators must take all measures necessary to ensure that the guidelines issued by the European Commission are properly and effectively implemented. To prevent violation of these measures, member states need to adopt rules on penalties and other enforcement tools, which may also include warnings and non-monetary measures. Engaging in prohibited AI practices can draw fines of up to €35 million or 7% of the company's global annual turnover, whichever is higher.
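To see how the ceiling for prohibited practices scales with company size, the arithmetic can be sketched as follows. The figures assume Article 99 of the adopted regulation (EUR 35 million or 7% of worldwide annual turnover, whichever is higher, with the lower of the two applying to SMEs); the function name and parameters are hypothetical, and the result is an upper bound rather than the fine an authority would actually impose.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling assumed from Article 99
TURNOVER_SHARE_PERCENT = 7   # share of worldwide annual turnover


def max_fine_prohibited_practice(global_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound, in whole euros, on a fine for a prohibited AI practice."""
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_cap = global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    if is_sme:
        # For SMEs and start-ups the lower of the two ceilings applies.
        return min(FIXED_CAP_EUR, turnover_cap)
    return max(FIXED_CAP_EUR, turnover_cap)


# A company with EUR 1 billion turnover: 7% exceeds the fixed ceiling.
print(max_fine_prohibited_practice(1_000_000_000))
# A company with EUR 100 million turnover: the fixed ceiling applies.
print(max_fine_prohibited_practice(100_000_000))
```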

The European Commission's newly established AI Office is tasked with clarifying provisions of the act and guiding national regulators.

Europe's Standards Organization Gears Up to Back AI Act

Europe's push to regulate artificial intelligence is being matched with a sweeping standard-setting effort. Joint Technical Committee 21 (JTC 21) of the European Committee for Standardization (CEN) and the European Committee for Electrotechnical Standardization (CENELEC), launched in June 2021, brings together more than 300 experts from over 20 countries to draft harmonized AI standards that will underpin the EU's AI Act.

Mandate and Role – The JTC 21 committee's mission is to shape standards that reflect Europe's market and societal needs, align with EU policy goals, and provide practical tools for legal compliance under the AI Act. It also coordinates with other technical committees and integrates international standards where appropriate.

Supporting the AI Act – The European Commission has formally tasked JTC 21 with developing harmonized standards that, once published in the EU's Official Journal, will give companies a “presumption of conformity” with the law. These standards will act as a compliance playbook, covering risk management, transparency, human oversight, cybersecurity, and quality assurance in the following areas:

- AI Trustworthiness Framework

- AI Risk Management to address operational risks

- AI Quality Management Systems for development processes

- AI Conformity Assessment to verify compliance

Further work is focusing on datasets, bias, computer vision, cybersecurity, robustness, logging, and natural language processing - areas seen as crucial for implementing the EU's risk-based regime.

The committee's full work program is available through the EU's eNorm platform.
